By Ranae Peterson

May 22, 2026

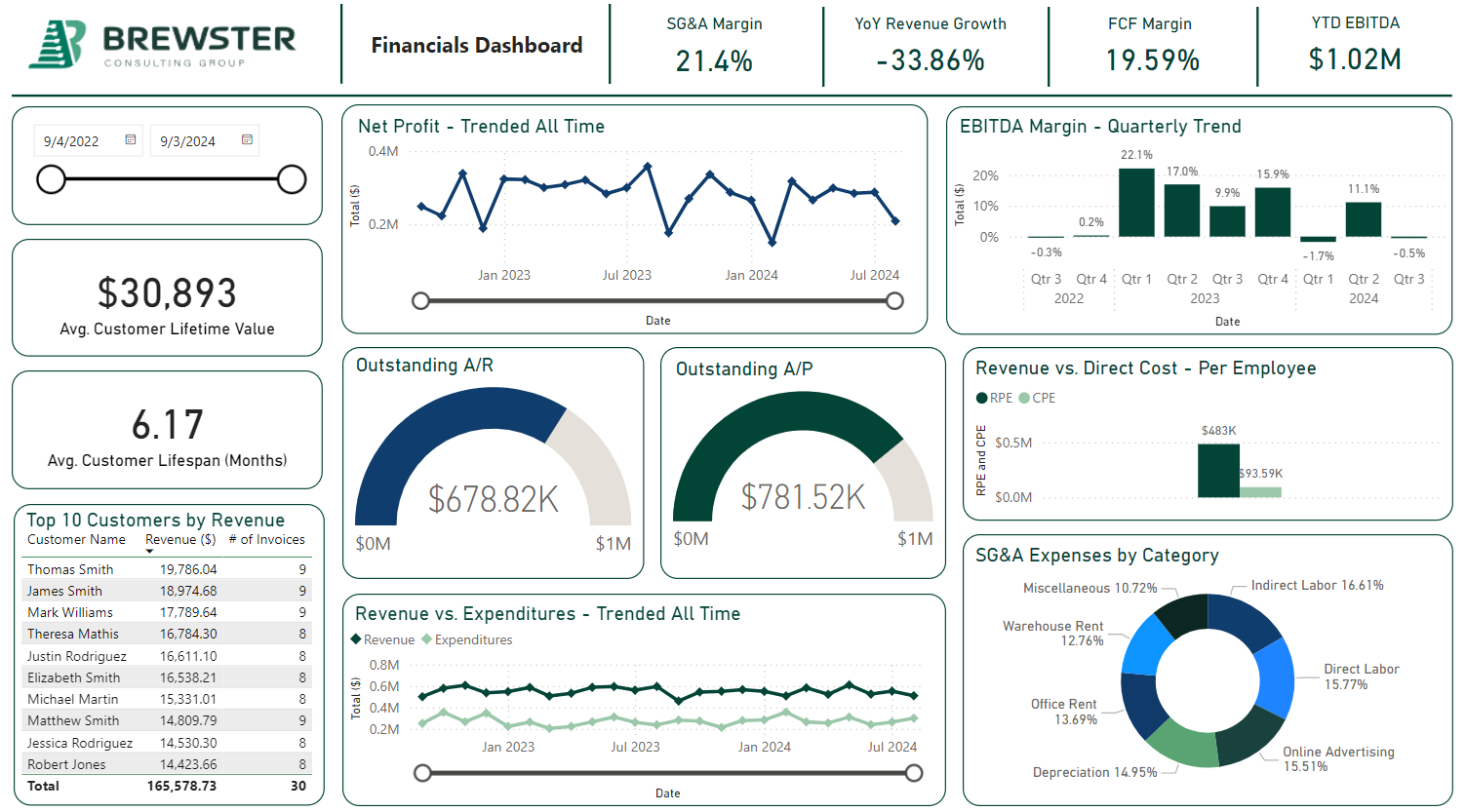

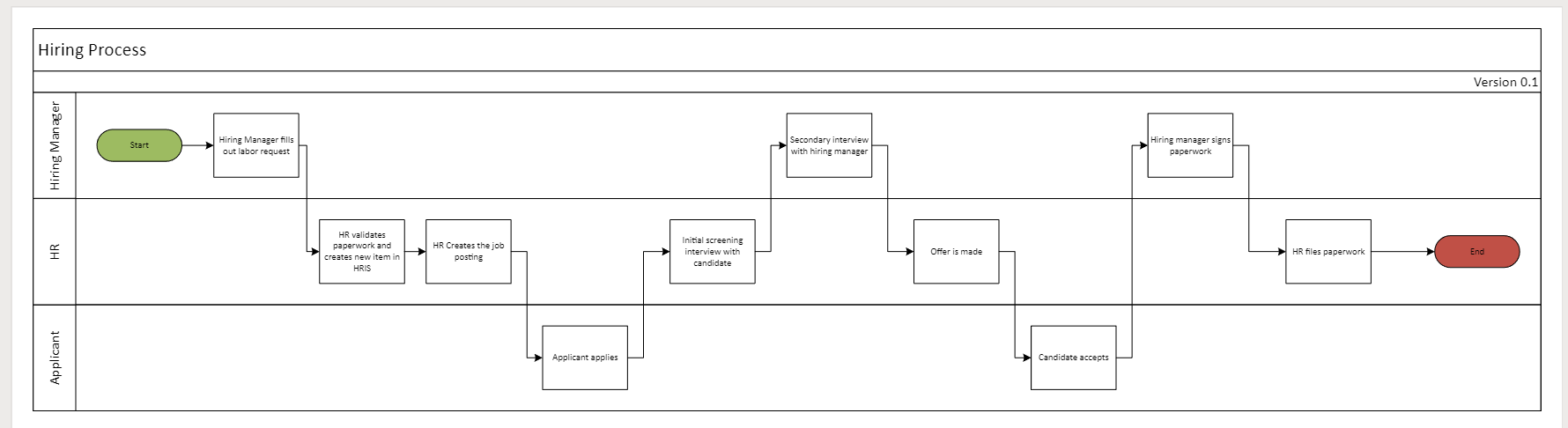

Artificial intelligence has rapidly transitioned from a long-term innovation topic to an immediate strategic priority. In today’s environment, executive teams are no longer asking if they should adopt AI; they are being pressured to define how quickly they can implement it and where it will deliver measurable impact. Boardrooms are filled with discussion about automation, productivity gains, and competitive differentiation. Investors are evaluating AI maturity as a signal of future readiness. Competitors are announcing new capabilities at an accelerating pace. The pressure to act is no longer subtle; it is systemic.

And in many ways, that pressure is justified. AI has the potential to fundamentally reshape how organizations operate. It can reduce the need for manual processes, enhance decision-making, improve customer responsiveness, and unlock entirely new business models. For executives responsible for growth, efficiency, and long-term viability, ignoring AI is not a viable option.

However, amid this urgency, a critical misalignment is emerging. Organizations are increasingly treating AI automation as a starting point rather than a scaling mechanism. In the rush to modernize, many are bypassing one of the most essential steps in any transformation effort: process improvement.

This shift may appear subtle, but its implications are significant. Process improvement has historically served as the foundation for operational excellence, ensuring that workflows are efficient, repeatable, and aligned with business objectives. It is the discipline that identifies inefficiencies, eliminates waste, and creates the structure necessary for sustainable performance. AI, by contrast, is an accelerator. It amplifies what already exists. When these roles are misunderstood or reversed, organizations risk building advanced capabilities on unstable ground. Instead of enabling transformation, AI initiatives begin to expose and often intensify existing weaknesses.
What makes this particularly concerning is that many organizations are aware of these foundational gaps and are proceeding regardless. The desire to capture the perceived benefits of AI (speed, scale, and efficiency) can overshadow the operational reality beneath the surface. The result is a growing pattern across industries: AI initiatives stall, underperform, or fail entirely, typically not because the technology lacks capability but because the environment in which it is deployed is not prepared to support it.

This is not an argument against AI. Quite the opposite. It is a call for a more disciplined and strategic approach to AI implementation, one that recognizes that the success of AI is determined not by the sophistication of the tools but by the strength of the foundation they are built upon.

## The Rush to Automate – Without the Foundation

AI promises speed, scale, and efficiency. For executives tasked with driving growth and innovation, that promise is compelling. Automation can reduce manual effort, improve responsiveness, and unlock new capabilities. However, what is increasingly evident across industries is that organizations are rushing toward automation without first addressing the underlying processes that automation is meant to enhance.

This is not just anecdotal; it is systemic. According to the World Economic Forum, 55% of companies report that outdated systems and processes are their largest barrier to AI implementation, yet many of these same organizations continue to push forward with AI initiatives anyway (World Economic Forum, “Why AI Fails Without Streamlined Processes”). This statistic highlights a critical contradiction: executives understand that operational gaps exist in their organizations, but the urgency to adopt AI often overrides the discipline required to fix them first.
## Why AI Initiatives Fail Without Process Improvement

The high failure rate of AI initiatives is not simply the result of immature technology or unrealistic expectations; it is often the result of insufficient preparation. Estimates suggest that as many as 80% of AI initiatives fail, particularly when factoring in technical, compliance, and adoption challenges, as highlighted in the same World Economic Forum discussion (World Economic Forum, “Why AI Fails Without Streamlined Processes”). While these failures are frequently framed as issues of implementation complexity, the underlying causes are far more fundamental.

Research from WorkOS (“Why Most Enterprise AI Projects Fail – and the Patterns That Actually Work”) identifies consistent failure patterns across enterprise AI implementations, most notably the absence of:

- A clearly defined operational framework
- Documented and standardized workflows
- Ownership and accountability across teams
- Reliable, high-quality data management practices
- Alignment between business objectives and technical execution

When these elements are missing, the downstream impact is predictable. Teams struggle with unclear roles and responsibilities, leading to gaps in execution and stalled initiatives. Data becomes fragmented or inconsistent, limiting the effectiveness of AI models and eroding trust in outputs. At the same time, processes themselves are often incomplete, with missing steps or undocumented variations that introduce ambiguity into otherwise critical workflows.

These are not isolated issues; they are common conditions in many organizations. The implication for executive leaders is clear: AI does not fail in isolation. It fails in environments that are not prepared to support it.

## Automation Doesn’t Fix Inefficiency, It Scales It

One of the most persistent misconceptions surrounding AI is that it can serve as a corrective mechanism for operational inefficiencies. The reality is quite the opposite.
AI is inherently dependent on the systems, processes, and data it is built upon. It does not independently diagnose and repair flawed workflows; it executes within them. When organizations automate without first improving their processes, several outcomes tend to emerge:

- Errors occur faster and more frequently because flawed logic is executed at scale.
- Operational complexity increases, as automation layers are added on top of already fragmented systems.
- Root causes become harder to identify, as issues are embedded within automated workflows.
- Costs rise over time, as organizations invest more resources into fixing problems that were never addressed at the source.

In effect, automation acts as a multiplier. If the underlying process is efficient, AI amplifies that efficiency; likewise, if the process is broken, AI accelerates the breakdown. This is why organizations that skip process improvement often find themselves revisiting it later, at a significantly higher cost and with greater disruption.

## The Strategic Role of Process Improvement

For executive leaders, process improvement should not be viewed as a prerequisite checkbox before AI adoption. It should be recognized as a strategic capability that directly enables the success of AI initiatives. Process improvement provides the structure, clarity, and discipline required for AI to deliver meaningful outcomes. At a deeper level, it contributes to several critical areas:

### Process Clarity and Standardization

When workflows are clearly defined and standardized, AI systems can operate with consistency and predictability. This reduces variability and ensures that automation aligns with intended outcomes.

### Data Readiness and Quality

High-quality data is foundational to any AI initiative. Process improvement ensures that data is captured, managed, and maintained in a way that supports accurate analysis and decision-making. Poor data quality can introduce risk at every stage, from model training to execution.
### Identification of High-Value Opportunities

Through process analysis, organizations can identify bottlenecks, inefficiencies, and cost drivers. This insight allows executives to prioritize AI investments where they will deliver the greatest return and to target high-impact use cases.

### Organizational Alignment and Accountability

Clear processes define roles, responsibilities, and ownership. This alignment is essential for successful AI implementation, particularly in cross-functional environments. Without it, initiatives often stall due to confusion or lack of coordination.

### Measurable and Sustainable Outcomes

Process improvement enables organizations to establish process definitions and baseline performance metrics for their execution. This makes it possible to measure the impact of AI initiatives and ensure that improvements are sustained over time.

In this sense, process improvement is not separate from AI strategy; it is integral to it.

## A More Disciplined Path to AI Adoption

For organizations seeking to move forward with AI in a meaningful and sustainable way, a more structured approach is required. This approach prioritizes readiness over speed and alignment over experimentation.

### Step 1: Build Strategic Awareness

Executives should begin by understanding the potential applications of AI within their organizations. This includes identifying areas where automation could enhance efficiency, improve customer experience, or reduce operational costs. However, this stage should remain exploratory, not reactive.

### Step 2: Establish Operational Readiness

Before any technology is implemented, organizations must assess and improve their processes. This includes mapping workflows, identifying inefficiencies, addressing data quality issues, and standardizing operations. This step is often the most time-intensive, but also the most critical.
### Step 3: Align AI with Business Objectives

With a solid operational foundation in place, organizations can begin to define how AI will integrate into their workflows. This involves selecting appropriate use cases, evaluating technology options, and ensuring alignment with strategic goals. At this stage, AI becomes a targeted solution, not a broad initiative.

### Step 4: Execute with Governance and Discipline

Successful AI implementation requires more than technical deployment. It requires governance frameworks, data security protocols, change management strategies, and ongoing performance monitoring. Execution must be deliberate, structured, and aligned with long-term objectives.

## The Executive Imperative

AI is often positioned as a competitive differentiator, and in many cases, it is. But its effectiveness is entirely dependent on the environment in which it is deployed. What we see across industries is not a failure of AI as a concept, but a failure of organizations to prepare for it properly.

The tendency to prioritize speed over readiness is understandable. Market pressures are real, and the pace of technological change is accelerating. But for executive leaders, the responsibility is not to move fastest; it is to move correctly. That means:

- Recognizing that process improvement is not optional.
- Investing in operational and data readiness before automation.
- Aligning AI initiatives with clearly defined business outcomes.
- Building a foundation that can support long-term scalability.

Ultimately, AI will not transform a business on its own. It will only enhance what already exists. And for organizations that take the time to build the right foundation, that enhancement can be transformative.

For organizations serious about realizing the full value of AI, operational readiness cannot be overlooked.
At Brewster, we partner with executive teams to bridge the gap between ambition and execution, ensuring that process, data, and strategy are aligned before automation is introduced. If this is a priority for your business, we welcome the opportunity to start that conversation.